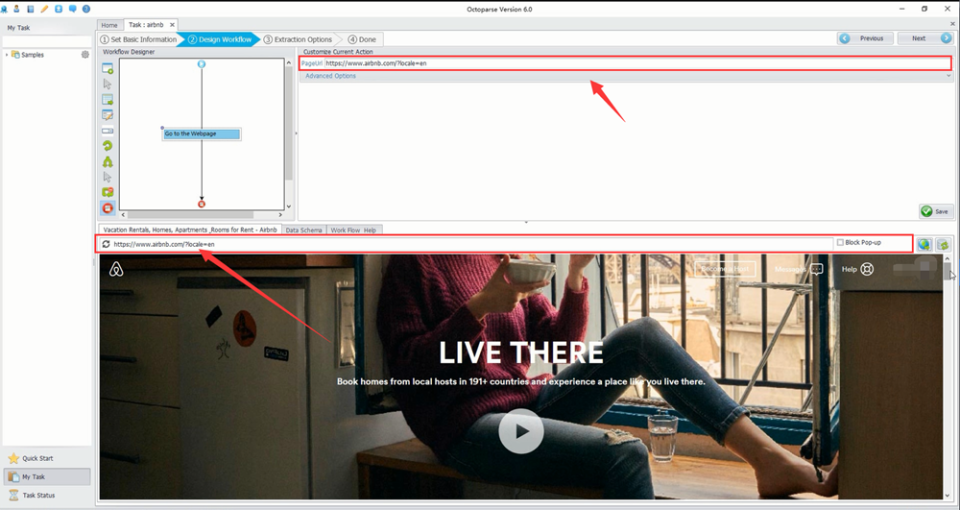

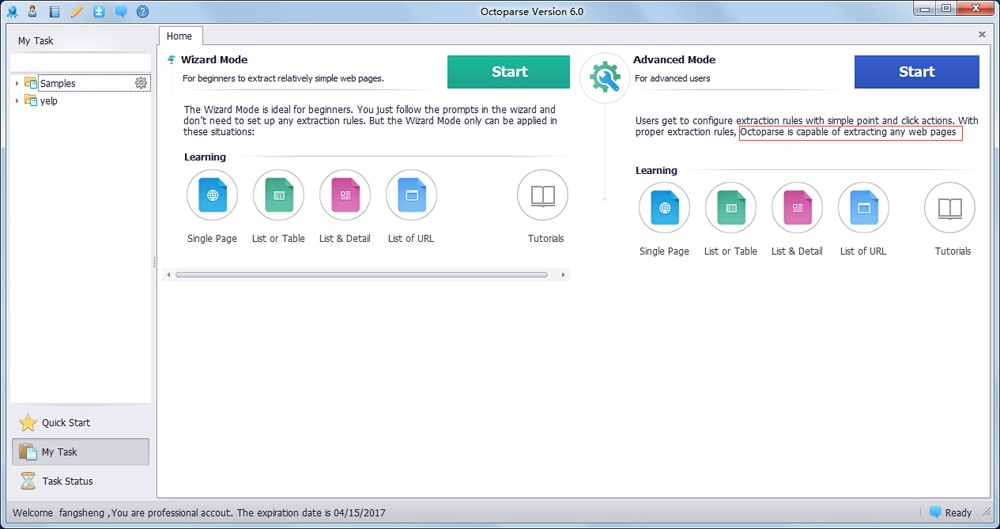

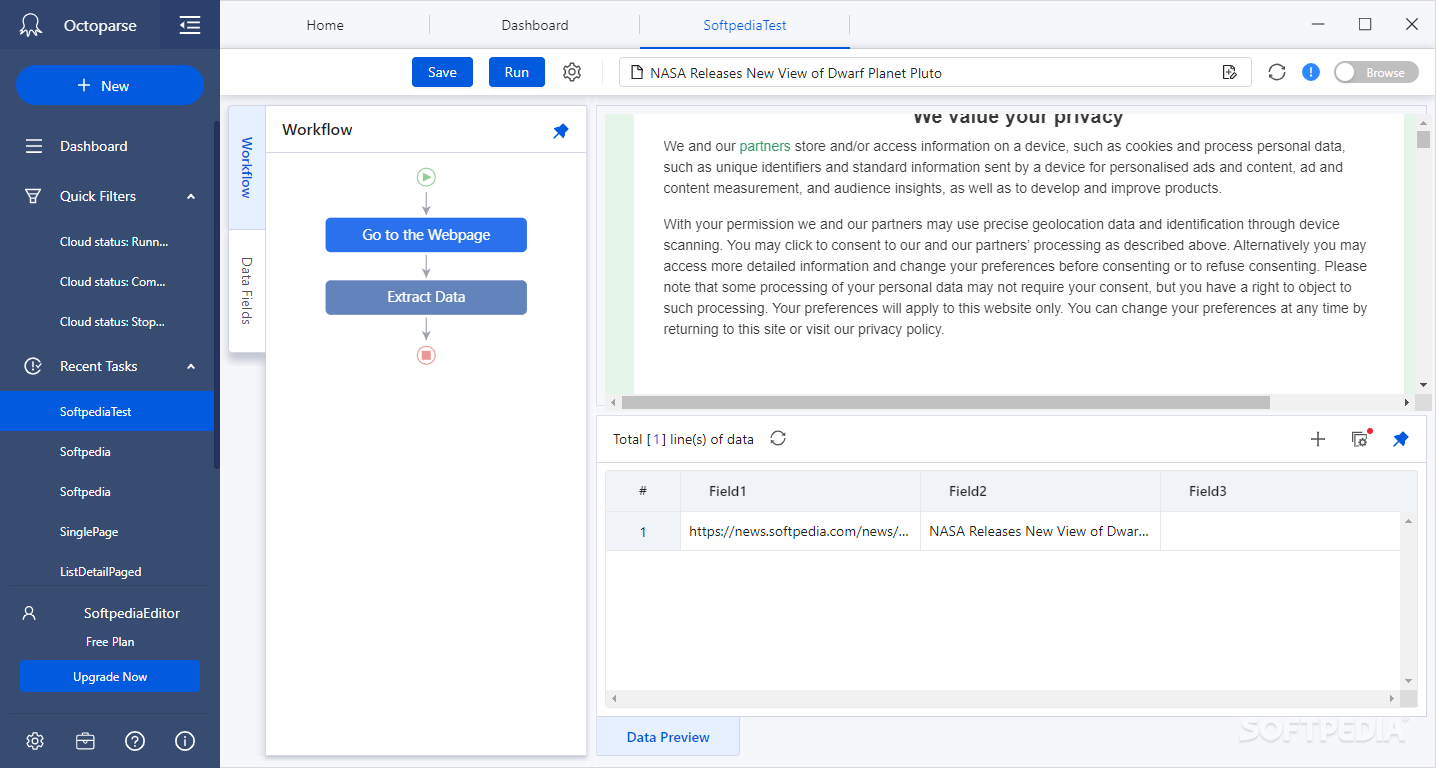

Comments: So far I am extremely impressed. My first impression was that it's just another scraping tool, but I no longer have to manually gather product details (like compatible printer models for our ink cartridges, for example), which took 6-9 months to do! It scraped my supplier's products, including product name, price, and even information that you could only get after clicking the link, and added it all to the Excel spreadsheet wonderfully. I used a free tool which only collected maybe one fifth of the products. Looking back, if I had found ScrapeStorm sooner, it would have saved, and actually generated, many more orders for my company, since there were so many missing products and details.

The boost feature is only available in higher plans, and there are no plans for someone who only does online inventory maybe once every few months. I would have loved a higher-priced premium plan, but I'm not going to pay double or triple the base plan fee just to use it for a couple of days a month.

You can use ParseHub to get sales leads from social media pages or to find prices on multiple marketplaces. There is no need to manually code a parser to fit your specific requirements, either. Some features provided by ParseHub certainly make data mining easier. For starters, you can have ParseHub collect data from interactive websites and sites that hide their raw data behind visual or JavaScript layers. It is also compatible with tables and maps, expanding your data mining capabilities even further. ParseHub supports scheduled runs and automatic IP rotation. If you want to update your data pool periodically, this is the tool to use. You will be surprised by how easy it is to configure automatic runs with this tool, regardless of how complex your data requirements are.

At the same time, ParseHub supports advanced features that are geared more towards serious data enthusiasts and pro users. Support for RegEx and CSS selectors, for example, is a great way to fine-tune your data mining routine on specific sites. The same is true for the ability to use API calls and webhooks for more advanced runtimes.

Octoparse is another handy tool to use if you want to mine data from public sources without the usual complex steps of setting up your own crawler. In fact, no setup is required at all, because Octoparse is also offered as a managed data mining and parsing service. Yes, you don't need to set up your own mining environment or pay for a dedicated cloud cluster to start collecting data. All you need to do with Octoparse is specify the kind of data mining job you want to run by filling out the request form. Data scientists working behind the scenes will make sure that you get the best data for your specific needs. Octoparse can be used for one-time data collections as well as long-term runtimes that require updates and remining. The service is also handy when you need to monitor certain data points but don't want to dedicate resources to completing that task regularly. Some of the biggest names in the business, including iResearch and Wayfair, are using Octoparse for their data needs.

Simplicity is the real advantage of using Octoparse. Since you don't have to set up your own data pools or configure a cloud cluster for mining purposes, you can bypass the entire getting-started phase and begin collecting data immediately. At the same time, you get the assistance of data scientists when you do submit a mining request. Other offline tools are also available, and many of them are designed to be very simple to use. However, simply installing the software or data mining tool that suits your needs is not enough.
